Web scraping has become an essential way for businesses and developers to collect data online. Common uses include price monitoring, competitor analysis, lead generation, market research, and AI training.

But scraping websites today is not as easy as it used to be. Many websites now rely on heavy JavaScript, dynamic content, browser fingerprinting, IP rate limits, and anti-bot protection such as Cloudflare. Because of this, simple scraping scripts often fail.

That’s why many teams now turn to a web scraping tool or data extraction API that handles technical challenges such as proxy rotation, browser rendering, and blocking, so you can focus on collecting the data you need.

Below, we discuss six of the best web scraping tools available today that can help you simplify your data collection processes.

Scrapfly is a developer-focused web scraping platform built for reliable and scalable data collection. It offers several web scraping tools and a complete Web Scraping API designed to solve common scraping problems. Instead of managing proxies and headless browsers yourself, you send a request and let Scrapfly handle the rest.

Advanced Anti-Blocking Protection

Scrapfly includes built-in protection against common anti-bot systems. It handles JavaScript challenges and blocking mechanisms automatically, which improves scraping success rates compared to more basic scraping methods.

Cloud Browser Rendering

For websites that rely heavily on JavaScript, Scrapfly provides cloud-based browser rendering. This lets you scrape dynamic websites without running your own headless browser.

Automatic Proxy Rotation

Scrapfly manages proxy rotation internally, including residential proxies. It also supports geo-targeting, which is useful when you need to access content from specific countries or regions.
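
To make the idea concrete, here is a minimal Python sketch of round-robin proxy rotation with per-country pools. It is not Scrapfly's implementation or API. The `ProxyRotator` class, the `POOLS` mapping, and the `proxy.example` addresses are hypothetical names used only for illustration.

```python
import itertools


class ProxyRotator:
    """Round-robin rotation over a fixed proxy pool, skipping blocked proxies."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("proxy pool must not be empty")
        self._proxies = list(proxies)
        self._blocked = set()
        self._cycle = itertools.cycle(self._proxies)

    def mark_blocked(self, proxy):
        # Call this when a target site starts rejecting requests from a proxy.
        self._blocked.add(proxy)

    def next_proxy(self):
        # Look at each proxy at most once per call so we never loop forever.
        for _ in range(len(self._proxies)):
            proxy = next(self._cycle)
            if proxy not in self._blocked:
                return proxy
        raise RuntimeError("all proxies in the pool are blocked")


# Hypothetical per-country pools, to illustrate geo-targeting.
POOLS = {
    "us": ProxyRotator(["http://us-1.proxy.example:8000",
                        "http://us-2.proxy.example:8000"]),
    "de": ProxyRotator(["http://de-1.proxy.example:8000"]),
}


def proxy_for(country):
    """Return the next usable proxy for a two-letter country code."""
    return POOLS[country].next_proxy()
```

A managed service does this kind of bookkeeping for you, which is the main reason to use one instead of maintaining your own pool.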

Developer SDKs and Integrations

The platform provides official SDKs for Python and TypeScript, along with Scrapy integration. It also connects with tools like Zapier, Make, n8n, and AI frameworks such as LangChain and LlamaIndex.

Monitoring and Debugging Dashboard

Scrapfly also includes a web dashboard where you can monitor requests, debug issues, and replay API calls. This makes troubleshooting failed requests much easier.

Bright Data is one of the most well-known companies in the web data industry. It provides scraping tools along with one of the largest proxy networks available.

Web Scraper API

Bright Data offers an API that handles proxy rotation, CAPTCHA solving, and browser rendering. This can help reduce the technical work needed for scraping.

Large Proxy Network

It is also known for its residential, ISP, mobile, and datacenter proxies, which are useful for accessing region-specific content and reducing blocks.

JavaScript Rendering

The platform also supports browser rendering, which allows scraping of dynamic websites that load content through JavaScript.

Enterprise-Scale Infrastructure

Bright Data is built for large-scale data collection, and it is commonly used for things like price tracking, travel monitoring, and market research.

Compliance Focus

Bright Data also emphasizes compliance and responsible data collection, which is crucial for enterprise users.

Zyte, formerly known as Scrapinghub, has been part of the web scraping industry for many years. It provides scraping tools and automated data extraction services.

Unified Scraping API

Zyte offers a single API that manages IP rotation, blocking, and browser rendering, which can simplify your scraping workflows significantly.

Automatic Proxy Management

Like the other tools on this list, Zyte handles proxy rotation internally to reduce blocking issues.

Browser Automation Support

It also offers support for headless browser automation, which makes scraping JavaScript-heavy websites easier.

AI-Based Data Extraction

Zyte includes automated extraction tools that structure scraped data, reducing the need for manual parsing.

Scalable Infrastructure

Zyte is designed for high-volume data collection, which is why it is often used by companies that need stable data pipelines.

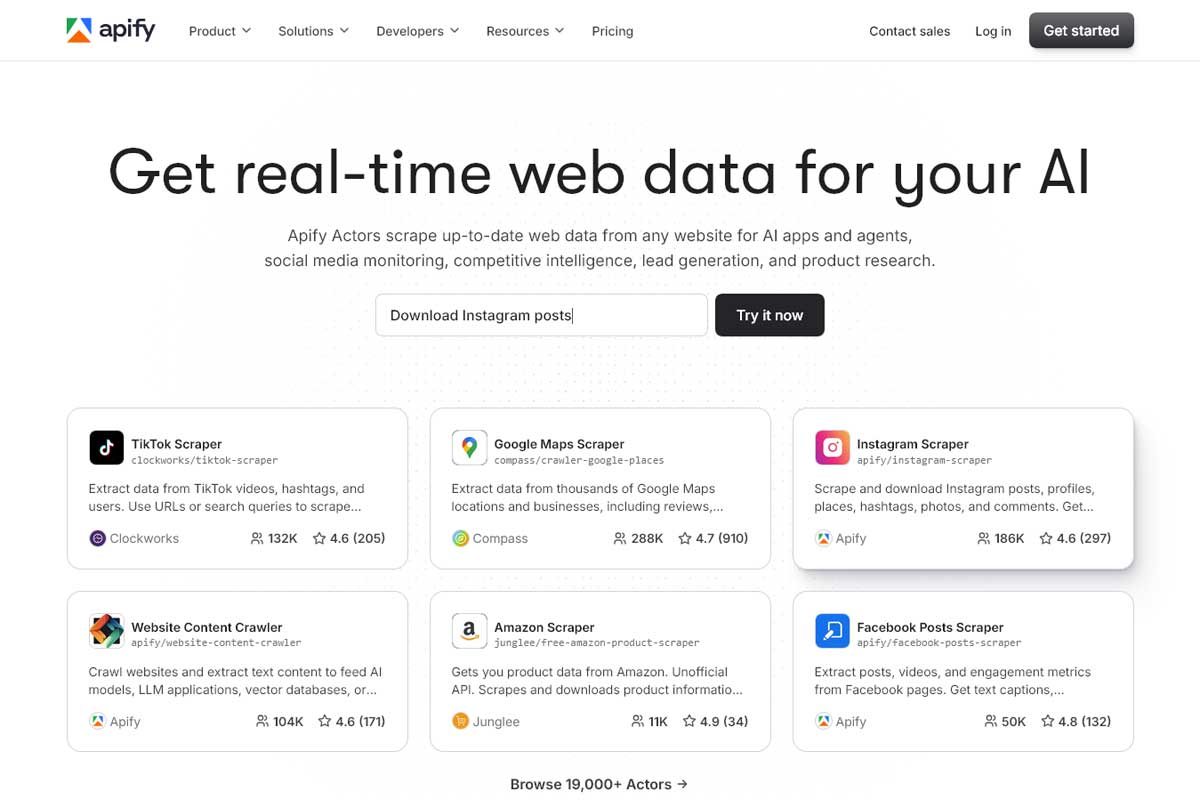

Apify is a web scraping and automation platform that provides both ready-made scrapers and a developer platform for building custom scraping tools.

Actor-Based Platform

Apify uses a system called “Actors,” which are serverless programs that can perform scraping or automation tasks. Developers can build and deploy their own Actors or even use pre-built ones from the Apify Store.

Large Marketplace of Ready-Made Scrapers

The Apify Store includes pre-built scrapers for platforms such as Google Maps, Instagram, Amazon, and more. This is helpful for users who do not want to build their own scrapers from scratch.

Proxy Integration

Apify also provides its own proxy service, which includes residential proxies that can easily be integrated into scraping workflows.

Cloud Infrastructure

The platform handles scheduling, scaling, and running scraping jobs in the cloud. This makes it especially suitable for recurring data collection tasks.

Developer-Friendly Environment

It also supports Node.js and provides SDKs and CLI tools for building and managing scrapers efficiently.

Octoparse is a no-code web scraping tool designed primarily for non-technical users. It allows users to collect data without writing code.

Visual Point-and-Click Interface

Octoparse provides a visual interface where users can select elements on a webpage to extract data. This interface makes it accessible even for beginners or business users.

Cloud Extraction

With Octoparse, users can also run scraping tasks in the cloud, which removes the need to keep a local computer running.

Template Library

It also offers pre-built scraping templates for popular websites. This can help speed up setup for common use cases.

IP Rotation Support

Like the other platforms discussed here, Octoparse includes proxy options to reduce blocking during scraping tasks.

Export Options

With Octoparse, you can export scraped data as CSV or Excel files, or access it through API integrations.
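
Although Octoparse itself needs no code, an exported CSV file often ends up in a script or data pipeline. The short Python sketch below shows one way to load such an export with the standard library. The `name` and `price` column names are assumptions for illustration, since the real columns depend on how the scraping task is configured.

```python
import csv
import io


def load_export(csv_text):
    """Parse exported CSV text into a list of dicts with a numeric price."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        # "name" and "price" are hypothetical column names.
        {"name": row["name"], "price": float(row["price"])}
        for row in reader
    ]
```

For a file on disk, the same function can be fed the result of reading the file as text.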

NodeMaven Scraping Browser is a cloud-based solution designed for scalable web scraping and automation. Instead of managing local browsers and infrastructure, users can run browser sessions remotely and control them through familiar tools like Puppeteer and Selenium. This simplifies setup and allows teams to focus on data collection rather than maintenance.

Cloud Browser Execution

NodeMaven runs browser sessions in the cloud, removing the need for local resources. This allows users to launch and manage multiple sessions at scale without performance limitations from their own devices.

Integration with Automation Tools

The browser connects directly with Puppeteer and Selenium via a simple endpoint. This makes it easy to integrate into existing scraping workflows and automate tasks programmatically.
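
As a rough illustration, connecting Selenium to a remote browser usually comes down to pointing `webdriver.Remote` at the provider's endpoint. The Python sketch below assumes a credentialed WebDriver URL. The host, the credential format, and the helper names `build_endpoint`, `open_remote_session`, and `scrape_title` are hypothetical and are not taken from NodeMaven's documentation, so check the provider's connection details before relying on them.

```python
from urllib.parse import quote


def build_endpoint(host, username, password, scheme="https"):
    """Build a credentialed endpoint URL (format is an assumption)."""
    # Percent-encode credentials so characters like "@" or ":" stay valid.
    user = quote(username, safe="")
    secret = quote(password, safe="")
    return f"{scheme}://{user}:{secret}@{host}"


def open_remote_session(endpoint):
    """Start a browser session on a remote WebDriver endpoint."""
    # Imported lazily; requires the third-party package: pip install selenium
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    return webdriver.Remote(command_executor=endpoint, options=options)


def scrape_title(endpoint, url):
    """Open a page in the remote browser and return its title."""
    driver = open_remote_session(endpoint)
    try:
        driver.get(url)
        return driver.title
    finally:
        # Always release the remote session, even if the page load fails.
        driver.quit()
```

Because the browser runs remotely, the rest of an existing Selenium script can stay largely unchanged once the driver is created this way.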

Proxy Integration

NodeMaven Scraping Browser works with residential and mobile proxies, enabling flexible geo-targeting and stable connections across different regions. This helps maintain consistent access when scraping various targets.

Scalable Session Management

Users can run multiple browser sessions in parallel, making it suitable for large-scale data collection and automation tasks.
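
On the client side, running sessions in parallel can be as simple as a bounded thread pool. The sketch below is a generic Python pattern, not NodeMaven's API. `run_sessions` and the `scrape_one` callback are hypothetical names, and `scrape_one` stands in for whatever function opens a session and scrapes a single URL.

```python
from concurrent.futures import ThreadPoolExecutor


def run_sessions(urls, scrape_one, max_sessions=4):
    """Run scrape_one(url) for every URL, at most max_sessions at a time.

    Returns a dict mapping each URL to its result, or to the exception
    that was raised, so one failed session does not abort the batch.
    """
    results = {}
    with ThreadPoolExecutor(max_workers=max_sessions) as pool:
        futures = {url: pool.submit(scrape_one, url) for url in urls}
        for url, future in futures.items():
            try:
                results[url] = future.result()
            except Exception as exc:
                results[url] = exc
    return results
```

Capping `max_sessions` keeps the number of concurrent browser sessions within whatever limit your plan allows.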

Simple Setup and Usage

The setup process is straightforward. Users configure browser settings, connect via API, and start running sessions without managing complex infrastructure.

Web scraping today is more complex than ever. Websites now use advanced protection systems and dynamic content, which makes simple scraping methods unreliable.

Each tool we discussed serves a different type of user, so the best option for you depends on your technical skills, project size, and scaling needs. Picking the right web scraping tool can make your data collection more reliable and much easier to maintain.

