Here is something most SaaS founders won't admit out loud: the product that got you your first thousand customers probably isn't the product that gets you to ten thousand. Not because you built the wrong thing, but because the bar keeps moving, and it has moved again.

Think about what SaaS actually meant when it took off. The whole promise was operational, not intelligent. You weren't selling a product that thought or adapted. You were selling access. Subscription over licence fee. Browser over installation. Automatic updates over CD shipments. That was a big deal at the time, and it worked because the alternative, buying and maintaining software on-premise, was genuinely painful.

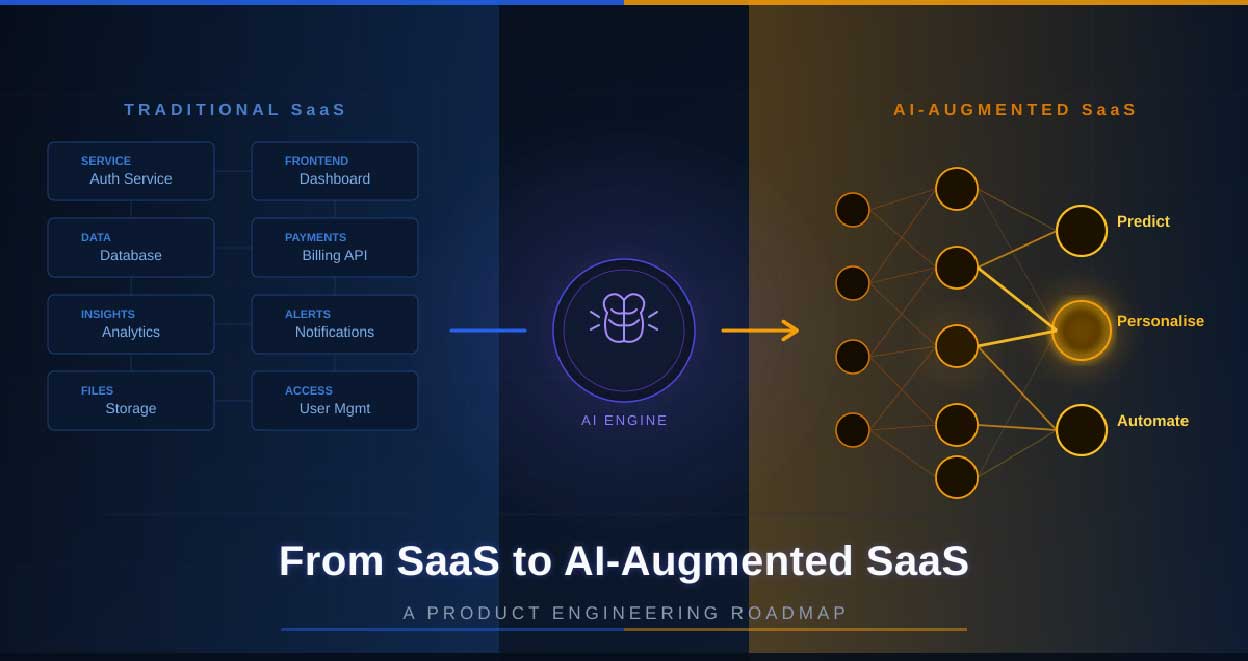

But that gap has closed. Most businesses now run entirely on SaaS tools, and the novelty of 'it just works in the browser' stopped being a selling point years ago. What users want now is different. They want software that does more of the thinking for them. And the SaaS products that are winning market share right now, the ones with the best retention numbers and the lowest churn, are almost all doing some version of AI integration. Whether they're calling it that or not.

If you're working with a SaaS development agency to build or scale your product and AI hasn't come up seriously in your planning conversations yet, this article is worth reading before your next sprint.

Honestly, the word 'benefits' makes this sound more abstract than it is. Let me put it more directly: AI changes what your product can do for users in ways that rule-based software simply cannot replicate. And those differences show up pretty quickly in the numbers that actually matter.

Traditional SaaS segments users by plan. Pro users get the Pro experience. Everyone on that tier gets the same interface, the same defaults, the same workflow. AI breaks that model wide open. When the product can look at how a specific person actually uses it, what they skip, what they come back to every day, where they slow down, it can start moulding around them. Users feel that shift even when they can't put words to it. The product stops feeling like generic software and starts feeling like a tool that was built for them.

Here's a frustration I hear from SaaS users constantly: 'I can see what happened, but the product won't tell me what's about to happen.' Most SaaS dashboards are basically historical records. They tell you last month's churn rate. They don't flag the accounts most likely to churn next month. AI closes that gap. Predictive features, whether it's identifying at-risk accounts, flagging a financial pattern before it becomes a problem, or estimating inventory shortfalls three weeks out, change the relationship users have with your product. They stop using it to review the past and start relying on it to navigate what's next.

Rule-based automation is good at clicking the same button over and over. What it cannot do is exercise judgment. AI can get closer to that. Triaging a support ticket based on its actual content, not just keywords. Drafting a first-pass contract response. Routing an approval request based on what the document actually contains rather than which folder it was put in. These feel like small things individually. Across hundreds of daily user interactions, they start to add up to something users notice and rely on.

Here's the thing about feature parity: it's achievable. If you ship a new reporting module, a competitor can have something similar in production within a few months. But if your AI model is trained on two years of behavioural data that's specific to your users, nobody can just copy that. The intelligence compounds over time, and the gap widens with every passing month of usage data. That's a moat that doesn't require patents or exclusive partnerships. It just requires that you start building it early enough.

This one surprises people. The switching cost for most SaaS tools is, honestly, not that high. You export your data, you set up the new tool, you're done in a few days. But when a product has spent six months learning how a user works, when it surfaces the right information at the right time because it knows their patterns, leaving means starting over with a tool that knows nothing about them. That friction is real, and users feel it even if they never articulate it. It's a retention mechanism that doesn't require a discounted annual plan or a loyalty programme.

Integrating AI isn't only a product question. The mistake a lot of teams make is treating it like one. They hand it off to a data science team and tell the product engineers to keep doing what they're doing. That approach produces AI features that feel like a separate layer of the product rather than part of it, and users can always tell.

Here's what actually needs to change in how the engineering team works.

When a user story gets written, someone needs to be asking: is there an AI angle here? If the answer is yes, the conversation shouldn't wait until the feature is built. What data does this need? How do we know if it's working? What happens when the model gets it wrong, because it will sometimes? These questions are much cheaper to answer in planning than they are to patch in production.

The teams doing this well don't have a separate AI workstream. They have product engineers who understand enough about ML to have these conversations, and they've baked AI considerations into how they write tickets and define 'done.'

This is the one that gets skipped most often and causes the most problems later. AI models don't improve on their own. They improve when they have good data to learn from. In a product context, that means capturing what users do with AI-generated outputs. Did they accept it? Did they change it significantly? Did they ignore it entirely and do something different? None of that gets captured automatically. You have to build the instrumentation deliberately, and you have to do it before launch, not as a post-launch improvement.

Teams that skip this ship an AI feature that works reasonably well at launch and then stays exactly that way forever. Users notice the stagnation eventually.
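As a rough illustration of the instrumentation described above, the sketch below classifies what a user did with an AI-generated suggestion (accepted it, edited it, or ignored it) and computes an acceptance rate. Every name here (`FeedbackEvent`, `Outcome`, `acceptance_rate`) is hypothetical, and a real product would persist these events to its analytics store rather than keep them in memory.

```python
from dataclasses import dataclass, field
from difflib import SequenceMatcher
from enum import Enum
from typing import List, Optional


class Outcome(str, Enum):
    ACCEPTED = "accepted"  # user kept the suggestion unchanged
    EDITED = "edited"      # user kept it but changed the text
    IGNORED = "ignored"    # user discarded it and did something else


@dataclass
class FeedbackEvent:
    feature: str               # hypothetical feature name, e.g. "reply_draft"
    suggestion: str            # what the model produced
    final_text: Optional[str]  # what the user ended up with; None if discarded
    outcome: Outcome = field(init=False)
    similarity: float = field(init=False)

    def __post_init__(self) -> None:
        if self.final_text is None:
            self.outcome, self.similarity = Outcome.IGNORED, 0.0
        elif self.final_text == self.suggestion:
            self.outcome, self.similarity = Outcome.ACCEPTED, 1.0
        else:
            # The similarity ratio hints at how heavily the user rewrote the output.
            self.similarity = SequenceMatcher(
                None, self.suggestion, self.final_text
            ).ratio()
            self.outcome = Outcome.EDITED


def acceptance_rate(events: List[FeedbackEvent]) -> float:
    """Share of suggestions that users accepted without changes."""
    if not events:
        return 0.0
    return sum(e.outcome is Outcome.ACCEPTED for e in events) / len(events)
```

Tracking the `similarity` score alongside the outcome is one way to tell "changed a comma" apart from "rewrote the whole thing", which matters when these events later feed evaluation or retraining.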

Software product engineering has always involved testing code before it ships. AI-augmented products require testing model performance as well, which is a different kind of test. You're looking for things like model drift, performance degradation on new data distributions, and edge cases the model wasn't trained on. If a new model version starts giving noticeably worse outcomes, you want to catch that before it reaches users, not in a support ticket three days later.

The product engineering teams handling this well have added model evaluation steps directly into their deployment pipelines. It's not an afterthought. It's a gate, the same way failing unit tests block a deploy.
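One way such a gate might look, as a minimal sketch: compare a candidate model against the current baseline on a fixed, labelled evaluation set and refuse to promote it if accuracy drops by more than a tolerance. The function names, the toy models, the golden set, and the 0.02 tolerance are all illustrative assumptions, and accuracy is only one of several metrics a real gate would check.

```python
from typing import Callable, Sequence, Tuple

Example = Tuple[str, str]  # (input text, expected label)

# Hypothetical labelled evaluation set; a real one would be far larger.
GOLDEN_SET: Sequence[Example] = [
    ("refund my card", "billing"),
    ("app crashes on login", "technical"),
    ("invoice is wrong", "billing"),
    ("cannot reset password", "technical"),
]


def accuracy(predict: Callable[[str], str], examples: Sequence[Example]) -> float:
    """Fraction of examples where the predicted label matches the expected one."""
    if not examples:
        raise ValueError("evaluation set is empty")
    return sum(predict(text) == label for text, label in examples) / len(examples)


def passes_gate(
    candidate: Callable[[str], str],
    baseline: Callable[[str], str],
    examples: Sequence[Example],
    max_regression: float = 0.02,
) -> bool:
    """True if the candidate is at most max_regression worse than the baseline."""
    return accuracy(candidate, examples) >= accuracy(baseline, examples) - max_regression


# Toy stand-ins for real model versions, purely for illustration.
def baseline_model(text: str) -> str:
    return "billing" if ("refund" in text or "invoice" in text) else "technical"


def regressed_model(text: str) -> str:
    return "technical"  # ignores the input, so billing tickets are misrouted
```

In a pipeline, a step would typically call `passes_gate` and exit with a non-zero status when it returns `False`, so that the deploy stops the same way it would on a failing unit test.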

This needs to be said clearly because it trips up a lot of teams. You do not need to train your own large language model to ship an AI-augmented SaaS product. For the overwhelming majority of use cases, pre-built APIs and models are more than sufficient. The decision that actually matters is how you integrate them. If you hard-code a dependency on a single AI provider, you're going to have a painful migration the next time a significantly better option becomes available. If you build modular integrations with clean interfaces, swapping out the underlying model is straightforward.
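To make "modular integrations with clean interfaces" concrete, here is a minimal sketch in which product code depends on a small interface rather than on any one vendor's SDK. The `TextModel` protocol and both stand-in providers are hypothetical; a real adapter would wrap whichever provider client you actually use behind the same `complete` method.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface product code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in provider used here in place of a real vendor adapter."""

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class ShoutingModel:
    """A second stand-in, to show that swapping providers needs no caller changes."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarise_ticket(model: TextModel, ticket_text: str) -> str:
    # The feature knows about prompts and tickets, not about vendors.
    prompt = f"Summarise this support ticket in one sentence: {ticket_text}"
    return model.complete(prompt)
```

With this shape, moving to a different underlying model means writing one new adapter class and changing where it is constructed, rather than touching every feature that calls it.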

Product engineers don't need to become data scientists. But they do need to be able to have informed conversations about prompt engineering, vector search, embedding models, retrieval-augmented generation, and model evaluation. These aren't research topics anymore. They're practical skills that come up in sprint planning. A focused two-week internal learning effort, or even a handful of engineers who go deep and share what they've learned, can shift the whole team's capability faster than people expect.

Most transition roadmaps are written at an altitude where all the hard parts look manageable. Here's a ground-level version that accounts for existing codebases, messy data, and teams that are already stretched thin.

Walk through your product's core workflows and ask one question at each step: is there a decision here that data could inform instead of the user? Those are your AI insertion points. You're not looking for everywhere AI could theoretically live. You're looking for two or three places where it would noticeably change the experience for the better.

Search functionality, document summarisation, anomaly detection in usage patterns, lead scoring, and support ticket classification tend to be strong starting points. They're well-understood problems with clear evaluation criteria. You know what good output looks like, which makes it much easier to know when the model isn't performing.

Most SaaS teams, the first time they go through this exercise, find that their data is in worse shape than they thought. Schemas that evolved organically over five years and are now a mess. Events that were supposed to be logged but weren't logged consistently. Labels applied by different people using different criteria. Timestamps that don't align across services.

None of that is unusual or embarrassing. But you need to find it before you build AI on top of it. AI trained on bad data does not produce cautious or uncertain outputs. It produces confidently wrong ones, and confidently wrong is harder to explain to users than obviously uncertain.

Fixing data infrastructure is slow, unglamorous work. Budget time for it.
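A first-pass audit does not need heavy tooling. The sketch below counts a few of the problems mentioned above in a batch of event rows: missing fields, unparseable timestamps, and timestamps without a timezone (a common cause of misalignment across services). The field names in `REQUIRED_FIELDS` are an assumed schema, not one taken from any particular product.

```python
from collections import Counter
from datetime import datetime
from typing import Dict, Iterable, Mapping

# Assumed schema for illustration; substitute your own event fields.
REQUIRED_FIELDS = ("user_id", "event", "timestamp")


def audit_events(rows: Iterable[Mapping[str, object]]) -> Dict[str, int]:
    """Count basic data-quality problems in a batch of event rows."""
    issues: Counter = Counter()
    for row in rows:
        for name in REQUIRED_FIELDS:
            if row.get(name) in (None, ""):
                issues[f"missing_{name}"] += 1

        raw_ts = row.get("timestamp")
        if raw_ts in (None, ""):
            continue  # already counted as missing above
        try:
            parsed = datetime.fromisoformat(raw_ts)
        except (ValueError, TypeError):
            issues["bad_timestamp"] += 1
        else:
            if parsed.tzinfo is None:
                # Naive timestamps cannot be aligned reliably across services.
                issues["naive_timestamp"] += 1
    return dict(issues)
```

Running something like this over a sample from each service gives you a concrete list of what to fix first, which is easier to budget for than a general sense that the data is messy.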

Take the highest-value, lowest-complexity AI use case from your workflow mapping and build it properly. Not a prototype. A production feature, with evaluation metrics, A/B testing, feedback capture, and a rollback plan.

The point isn't just to ship the feature. It's to put your team through the full cycle of building, deploying, and operating an AI feature in production. You will learn things during this phase that no amount of advance planning would surface. That's expected. Build in time for it.

Your tooling may need to expand here to include model monitoring that integrates into your existing observability stack. There are solid options available that don't require a complete infrastructure rethink.

Once the pilot has proven the team can do this, the next investment is in infrastructure that makes subsequent AI features faster and cheaper to build than the first one. A feature store where processed data can be shared across models. A prompt library so nobody solves the same problem independently three times. A model registry that tracks what's deployed, when, and with what performance characteristics. Shared evaluation frameworks that apply consistent quality standards.

Without this infrastructure, every new AI feature is its own expedition. With it, the second and third features get built at maybe sixty percent of the effort of the first.
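Of the shared pieces listed above, the model registry is the easiest to picture in code. This is a deliberately small in-memory sketch that records what was deployed, when, and with which metrics; `ModelRecord` and `ModelRegistry` are hypothetical names, and a production registry would sit on a database or a dedicated tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass(frozen=True)
class ModelRecord:
    name: str                  # logical model name, e.g. "ticket-classifier"
    version: str
    deployed_on: date
    metrics: Dict[str, float]  # evaluation results captured at deploy time


class ModelRegistry:
    """Tracks what is deployed, when, and with what performance characteristics."""

    def __init__(self) -> None:
        self._records: Dict[str, List[ModelRecord]] = {}

    def register(self, record: ModelRecord) -> None:
        self._records.setdefault(record.name, []).append(record)

    def history(self, name: str) -> List[ModelRecord]:
        """All versions of a model, oldest deployment first."""
        return sorted(self._records.get(name, []), key=lambda r: r.deployed_on)

    def latest(self, name: str) -> ModelRecord:
        """The most recently deployed version; raises KeyError if unknown."""
        versions = self.history(name)
        if not versions:
            raise KeyError(name)
        return versions[-1]
```

Even this much answers the question that tends to come up during an incident: which version is live, and how did it score when it shipped?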

From here, the roadmap becomes a practice rather than a fixed sequence. Ship new AI features behind feature flags so you can roll them out gradually and pull back quickly if something unexpected happens. Collect feedback signals aggressively, behavioural ones more than survey responses. Retrain models on fresh data as it accumulates. Track which AI features are actually moving the metrics that matter, and be willing to deprioritise the ones that aren't.
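Gradual rollout behind a feature flag is often implemented with deterministic bucketing, so a given user sees consistent behaviour as the percentage grows. The sketch below shows one common approach using a hash of the flag name and user ID; the function name and the flag name in the usage note are illustrative, and dedicated feature-flag services offer the same idea with more controls.

```python
import hashlib


def in_rollout(user_id: str, flag: str, percent: int) -> bool:
    """Deterministically decide whether a user falls inside a percentage rollout.

    Hashing flag and user together gives each flag its own independent
    bucketing, so the same users are not always first in line for every feature.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable value in 0..99
    return bucket < percent
```

Because a user's bucket never changes, raising `percent` from 10 to 50 only adds users, and setting it back to 0 pulls the feature from everyone at once, for example with `in_rollout(user.id, "ai-summaries", 0)`.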

The compounding effect here is the whole point. A product that gets measurably smarter every quarter builds a kind of user trust that is genuinely difficult for a competitor to disrupt, even with a larger engineering team or a bigger marketing budget.

Choosing a development partner for something like this matters more than most people give it credit for at the start of the process. Technical capability is one filter, and it's the obvious one. But the less obvious factors end up being just as important: do they communicate clearly when things get complicated, do they push back when something is going to cause problems, and are they still reachable six months after launch when you need something fixed quickly.

Here are the companies in the UK market worth a serious look.

For businesses that need genuine end-to-end SaaS product engineering with real AI depth, Bytes Technolab is one of the stronger options available in the UK right now.

What separates them from agencies that have recently updated their website to say 'AI-powered' is that they were doing serious engineering work before the current AI wave hit. They have opinions about architecture. Their team asks the uncomfortable questions before development starts rather than discovering the problems mid-sprint. Why does this feature need to exist? What breaks when the load triples? Where is the data actually coming from, and is it reliable enough to build on?

Their technical range covers the full SaaS stack: multi-tenant architecture, API-first design, microservices, cloud infrastructure across AWS, Azure, and GCP. On the AI side, they've built real capability around LLM integration, retrieval-augmented generation, and ML-powered feature development. Not wrapper-level work.

They've shipped products across FinTech, HealthTech, HR Tech, EdTech, and eCommerce, which means for most B2B SaaS verticals, they've already worked through the specific compliance challenges, integration quirks, and user behaviour patterns that will shape your build.

UK businesses specifically get a lot of value from their approach to GDPR compliance. It's not a checklist item they tick at the end. It's something they architect around from day one. That distinction matters more than it sounds, especially when you're building AI features that touch sensitive user data.

The reason people keep coming back to Bytes Technolab isn't just the output quality. It's that they behave like a product team rather than a delivery vendor. Sprint cycles are structured, communication doesn't disappear between milestones, and when something in the brief doesn't make sense they'll tell you rather than building it anyway.

Why hire Bytes Technolab?

- Full-cycle SaaS and AI integration, from discovery through post-launch support
- GDPR compliance built into architecture from day one, not bolted on later
- Cross-industry experience across FinTech, HealthTech, HR Tech, EdTech, and eCommerce
- AI capabilities go well beyond API wrappers into real ML feature development
- They function as a product partner and will push back when something needs pushing back on

Zudu suits startups and growing SMEs that want a focused, agile team without the overhead structure of a larger agency. They've built a solid track record with bespoke SaaS applications, particularly where the brief is specific and the timeline is demanding. Not the right choice for large enterprise builds, but for founder-led teams with a clear product vision, they're worth a conversation.

Intellectsoft has worked out the parts of enterprise SaaS delivery that trip up most agencies: complex third-party integrations, legacy system migrations, and the governance frameworks that large organisations actually require. Their UK presence is substantial, and they're a sensible call when the project involves untangling something complicated while simultaneously building something new.

Netguru built their reputation at the intersection of design thinking and engineering, which sounds like a tagline until you see how many SaaS products fail because somebody treated UX as a nice-to-have. They're a strong fit for growth-stage companies entering competitive markets where user experience is a primary differentiator, not a secondary consideration.

For founders who need to validate a concept before committing to a full build, Relevant Software is a pragmatic choice. They move quickly, they understand that an MVP's job is to generate learning rather than impressions, and they don't overengineer things that don't need it yet.

| Company | Strongest Suit | Ideal Client |
|---|---|---|
| Bytes Technolab | Full-cycle SaaS + AI integration | Startups to enterprise |
| Zudu | Agile bespoke SaaS builds | SMEs and founders |
| Intellectsoft | Enterprise-scale delivery | Large organisations |
| Netguru | Design-led SaaS engineering | Growth-stage companies |
| Relevant Software | MVP and rapid prototyping | Early-stage founders |

There's a version of this transition that goes badly, and it usually looks the same across companies. Someone senior gets excited about AI, announces it internally, a few engineers get pulled off their current work to spike something, it half-works in a demo, and then it quietly dies because nobody built the data infrastructure to support it or thought through what 'good output' actually looks like for real users.

The teams that do this well are not necessarily the ones with the biggest budgets or the most experienced engineers. They're the ones that start with honest answers to a few basic questions. What does our data actually look like right now? Where in the product are users making decisions that better information could improve? What would we need to build to know whether an AI feature is actually working?

Answer those questions first. Build from there. The technical challenges are real but they're solvable. The harder work is the clarity before the first line of code gets written.

And if you're bringing in a partner to help with this, whether that's a SaaS development company for the full build or a product engineering team for a specific phase, hold them to that same standard of clarity. The agencies that wave away these questions in favour of getting to the build fast are the ones that create expensive problems eight months in.

The window for doing this thoughtfully, rather than reactively, is still open. But it won't stay open indefinitely.